Part 05 – Metrics

Should we not get away from numbers that we calculate to compare ourselves with one another?

I suspect that most researchers would agree that the journal impact factor (JIF) is not a very useful tool for measuring scientific quality or output. Besides the JIF, however, there are other metrics that try, in various ways, to quantify the work we produce. The question I ask at the end of this Black Box Science episode is whether we should try to identify the “good” (useful) metrics, or whether we should move away entirely from translating scientific publications into numbers that we can compare.

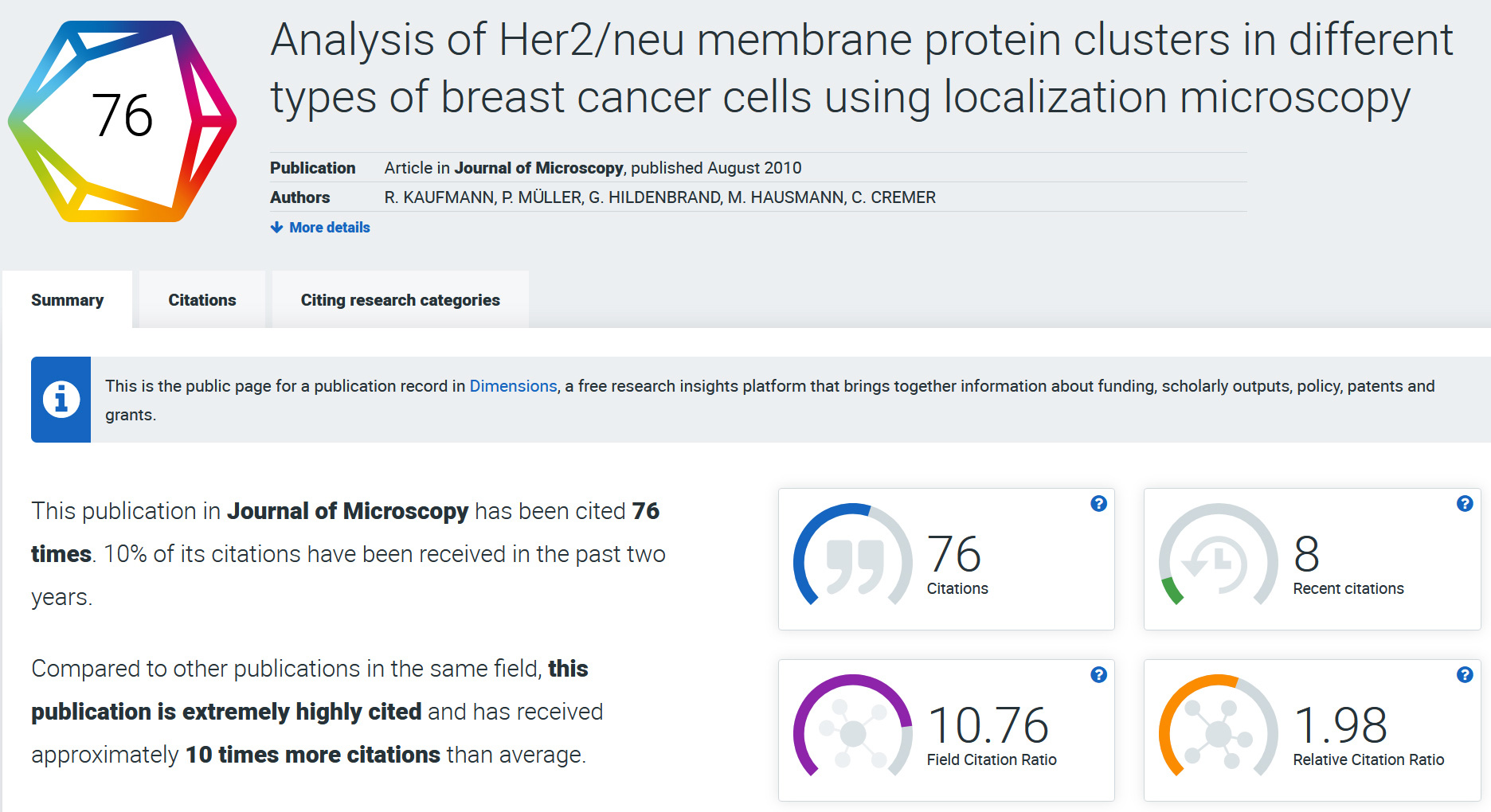

Some metrics are at least better proxies for the “impact” of a paper. One of them is how often an individual paper is cited by other papers. This reflects the scientific community’s interest in the published work much better than the JIF does. I would argue that a highly cited paper published in a smaller, specialized journal reflects the “high impact” of the work even more than a paper that gets a lot of attention possibly mainly because it appeared in a prestigious journal with a high JIF. The number of citations of a paper can be looked up almost as quickly as the JIF. Of course, if it is not provided, you have to look it up for every single paper, for example when you are evaluating the output of another researcher. Going through a publication list and looking for signs of “CNS” (Cell, Nature, Science) is still much quicker. However, there are tools such as Dimensions, which can, for example, be integrated into the website listing a researcher’s publications to show scores such as a paper’s citation count directly, together with how it compares to the average in the field.

Example output from Dimensions for the publication from my PhD that I mentioned in the video. The scores give a quick overview of an article’s citations and how they compare to the average in the same field.

Another metric that I find useful is the Altmetric score. It tries to measure the impact of a paper outside of scientific publications. This includes, for example, the attention a paper creates on social media, but also in news outlets, blogs, Wikipedia, etc. The Altmetric score reflects both the attention a paper creates within its scientific field (e.g. through the number of tweets in the scientific Twitter world) and the attention outside, in the real world. Consequently, a paper on a topic that interests a very broad audience has the potential to reach a very high Altmetric score, and this needs to be taken into account when comparing Altmetric scores. For example, the Altmetric scores of my papers basically reflect the attention within the world of microscopy people. Papers that my wife publishes on topics such as how your lifestyle affects the chances of developing dementia later in life achieve Altmetric scores that are orders of magnitude higher than mine. This also shows that the Altmetric score can give an indication of how much impact a paper has on society. As with any metric, however, one has to be careful not to use the numbers blindly. Much basic research is very important as a foundation for subsequent, more applied research, but may sound quite boring to the wider public at first.

The alternative question was: Should we not get away from numbers that we calculate to compare ourselves with one another? Here, the ‘San Francisco Declaration on Research Assessment’ (DORA) is an important step in the right direction. The aim of DORA is to establish more suitable criteria for assessing scientific quality and research output. One of its central points is to avoid poor proxies such as the JIF and to focus instead on the content and the “real” impact of individual papers. Impact is difficult to measure and is not the same as attention. Judging how much impact someone’s research creates requires looking at its long-term effects: How is it picked up by other researchers in the field? How does it carry over into other fields? What are the benefits to society? Coming back to the comparison above between a microscopy paper and a paper on lifestyle and dementia: depending on the field you work in and how close it is to direct applications and consequences for society, the “real” impact might in one case be assessed from a single publication, while in another case it might take many years. Moving away from numbers that can be calculated quickly and easily therefore also means more work for anyone trying to evaluate the work of another researcher.

At the time of writing, 21,810 individuals and organizations worldwide have signed DORA. I haven’t done a proper assessment, but it feels as if most universities in the UK and many in the US have signed it. When I checked the situation in Germany, I counted 3(!) universities. At least the DFG (German Research Foundation) and the Volkswagen Foundation have signed. My hope for the scientific community in Germany is that this encourages more universities to sign the declaration as well and to change how they assess researchers. Change will only happen if institutions actively start contributing to the aim of a fairer research environment. In an article about improving the research culture of an institution (‘Setting the right tone’), Tanita Casci and Elizabeth Adams from the University of Glasgow make this point very clearly: “Success will not come from issuing policies […]. Even if university policies are read, they will be forgotten unless the principles are embedded in standard practice. And if we are not serious about our practices, then we are not credible about our intentions”.

In the individual episodes and blog entries, I will share my experiences and discuss different topics that concern us in our everyday lives as scientists. Join the discussion, share your opinions and help to solve issues in our scientific culture.